On Tuesday, Adobe added “Generative Fill,” a new Photoshop beta tool that uses cloud-based image synthesis to fill selected areas of an image with new AI-generated content based on a text description. Powered by Adobe Firefly, Generative Fill works much like the technique called “inpainting” that has been used in DALL-E and Stable Diffusion releases since last year.

At the core of Generative Fill is Adobe Firefly, Adobe’s custom image synthesis model. Firefly, a deep learning AI model, has been trained on millions of images from Adobe’s stock library to associate particular imagery with text descriptions. That capability is now built into Photoshop: people can type in what they want to see (for example, “a clown on a computer monitor”), and Firefly will generate several options to choose from. Generative Fill uses a well-known AI technique called “inpainting” to create context-aware generations that blend synthesized imagery seamlessly into an existing image.

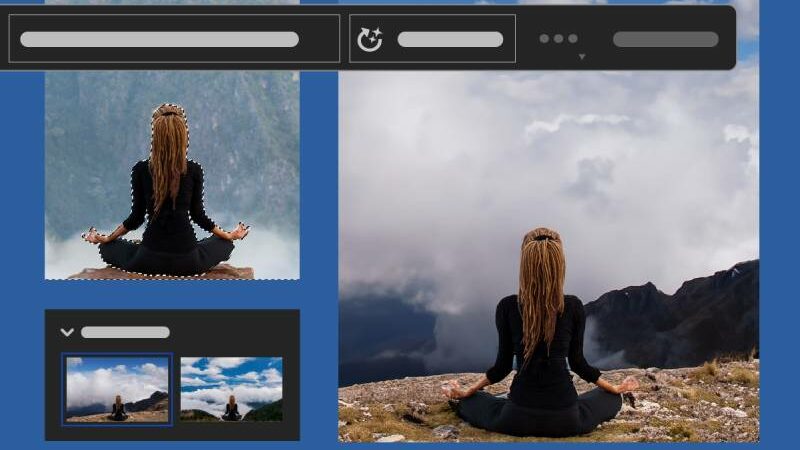

To use Generative Fill, users select an area of an existing image they want to modify. Once the selection is made, a “Contextual Task Bar” appears, allowing users to enter a description of what they want to see generated in the selected area. The request is processed in the cloud, and the results are returned to Photoshop. After generating, the user can choose from several variations or generate more options to browse.

When used, the Generative Fill tool creates a new “Generative Layer,” allowing non-destructive alterations of image content, such as additions, extensions, or removals, driven by these text prompts. It automatically adjusts to the perspective, lighting, and style of the selected image.

Generative Fill is not the only AI-powered feature in the Photoshop beta. Firefly also lets Photoshop remove parts of an image entirely, erasing objects from a scene, or extend the dimensions of an image by generating new content that surrounds the existing image, an AI technique known as “outpainting.”

These features have been available in OpenAI’s DALL-E 2 image generator and editor since August of last year (and in various homebrew releases of Stable Diffusion since around the same time), so Adobe is only now catching up by adding them to its flagship image editor. Admittedly, that is a quick turnaround for a company that may have a significant liability target on its back over issues such as the generation of harmful or socially stigmatized content, the use of artists’ images as training data, and the powering of propaganda or disinformation.

Along those lines, Adobe blocks certain copyrighted, violent, and sexual keywords from being used in prompts, and it relies on its terms of service to prohibit users from creating “abusive, illegal, or confidential” content.

Also, given Generative Fill’s ability to easily warp the apparent media reality of a photo (admittedly, something Photoshop has been doing since its inception), Adobe is doubling down on its Content Authenticity Initiative, which uses Content Credentials to add metadata to generated files that helps track their provenance.

Generative Fill in the Photoshop beta app is currently available to all Creative Cloud members who have a Photoshop subscription or trial, through the “Beta apps” section of the Creative Cloud app. It is not yet available for commercial use, is not available to people under 18, is not available in China, and currently supports text prompts in English only. Adobe plans to make Generative Fill available to all Photoshop customers by the end of the year so anyone can create lawn clowns with ease.

If you don’t have a Creative Cloud subscription, you can try Generative Fill for free as a web-based tool on the Adobe Firefly website with an Adobe login. Adobe recently removed the Firefly beta waitlist.
